Microsoft Kills Kinect

Microsoft Stops Manufacturing Kinect

It is no longer a rumour. Microsoft has finally admitted that it is not going to continue manufacturing its Kinect 3D depth camera.

Since its introduction in November 2010, Microsoft has sold around 35 million units of its Kinect for Xbox 360, which became the fastest-selling consumer device back in 2011, according to Guinness World Records.

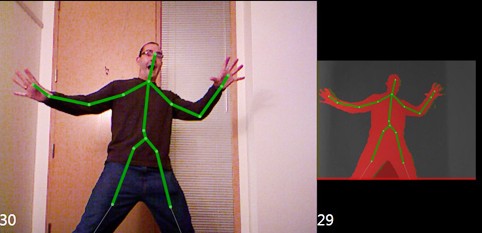

Since its introduction, the Kinect has become a preferred tool for developers creating body-motion experiences that track body poses and movement by sensing depth.

The technology behind the original Microsoft Kinect was developed by the Israel-based PrimeSense, a company that was subsequently acquired by Apple in 2013.

It is interesting to note that the core of Apple’s newest iPhone X is basically a miniaturized version of PrimeSense’s original technology.

According to recent announcements, Microsoft will continue supporting Kinect for customers on Xbox, but the future of its developer tools remains unclear.

Microsoft shared the news with Co.Design in exclusive interviews with Alex Kipman, creator of the Kinect, and Matthew Lapsen, GM of Xbox Devices Marketing, as stated in Mark Wilson’s post.

Furthermore, according to this post, Kinect’s team of specialists have gone on to build essential Microsoft technologies, including the Cortana voice assistant, the Windows Hello biometric facial ID system, and a context-aware user interface for the future that Microsoft dubs Gaze, Gesture, and Voice (GGV).

[Source Image: Microsoft]

Launched in 2010 with a $500 million marketing campaign, the Kinect painted a room with a multitude of invisible, infrared dots, mapping it in 3D space and allowing unprecedented tracking of the human body. The Kinect seemed perfect for getting gamers off the couch. Why press a button to duck, when you can just duck? It also enabled handy voice commands, when they worked, like “Xbox On” to turn on the Xbox One console.

As one of the first journalists to try Kinect back in 2010, though, it was immediately apparent to me that Kinect was a lot more important than what was then popularly framed as Microsoft’s Nintendo Wii killer. (Remember the Nintendo Wii?!?) It was Microsoft’s greater attempt to blur the line between the human body and the human interface–beyond the existing limitations of keyboards, mice, and even touch screens.

It is not an exaggeration to say that Kinect has been the single most influential, or at least prescient, piece of hardware outside of the iPhone. Technologically, it was the first consumer-grade device to ship with machine learning at its core, according to Microsoft. Functionally, it’s been mimicked, too. Since 2010, Apple introduced the Siri voice assistant copying the speak-to-control functions of Kinect, and Google started its own 3D tracking system, called Project Tango (which was founded and continues to be led by Johnny Lee, who helped on the original Kinect). Vision and voice systems have become nearly ubiquitous in smartphones, and they’re gradually taking over homes, too. Take Amazon Echo bringing voice assistants to our grandparents’ living rooms–or the newer Echo Show upping the ante by adding a camera to Alexa. Even the networked Nest Cam owes a debt to the Kinect being first through the gate, and taking the brunt of criticism on a whole new era of privacy concerns.

“When we introduced Xbox One, we designed it to have the best experience with the Kinect. That was our goal with the Xbox One launch,” says Lapsen. “And like all product launches, you monitor that over time, you learn and adjust.” In practice, the Xbox’s target demo cared more about a few extra polygons than some new paradigm in human-computer interaction. So Microsoft decided to invest its talents in other products.

But Levin, and other researchers like him, adored the Kinect for its forward-looking technologies. “The important thing about Kinect is it showed you could have an inexpensive depth camera. And it supported the development of thousands of applications that used depth sensing,” Levin says. He points out that it was literally Microsoft Kinect hardware that made it possible for a startup like Faceshift to exist. Faceshift was built to perform extremely accurate 3D tracking of the human face, suitable for biometric security, and Apple acquired it to replace its thumbprint scans.

And, as stated before, to take advantage of the technology, Apple essentially built a Kinect clone right into the iPhone X, having acquired PrimeSense, the Israeli company that developed the 3D tracking technology Microsoft licensed for the first Kinect, in 2013.

“That’s one of thousands of applications Kinect made possible,” Levin continues. “Not to mention its immense impact on computer research, robotics research and interactive media arts, which is my field.” Some of Levin’s own students used Kinect to make a documentary in pointillist 3D–and then, expanded the technique to create an interactive film inside a real NYC subway car. Today, Levin points out that there are other depth-sensing cameras on the market, aside from the hackable, standalone Kinect. But he also adds that, to all the museums which feature Kinect-powered interactive art in their exhibitions? They might want to go on eBay and buy a few backups. (I suspect that Levin himself is making a trip to eBay today, too.)

But depth cameras are here to stay. Companies such as Lusens, 4dHealthScience and others have developed their own 3D body-motion tracking technology which, along with AI-driven software, has allowed them to create amazing, engaging and mesmerizing applications.

“People say I invented it,” says Kipman. “I didn’t invent Kinect. I went through this table and identified the easiest opportunity.”

Over time, it got a lot better. It began to see more–from a mere 50-degree field of view, to 80 degrees, to 120 degrees in the V4 sensor used in the HoloLens. It also, crucially, began to use less power: from 50W, to 25W, to a 1.5W peak today.

These steady improvements allowed Kinect to be miniaturized into something small enough that we could wear it. And on that matrix? “We went to the right, and one down,” says Kipman. “We said, we kinda know how to do simple 1X1 problem, now it’s time to get more ambitious.” So in 2015, Microsoft announced the HoloLens. The 10×10 problem: Environmental output. That means HoloLens could see not just a person, but space. And it could not just recognize this space, but allow people to output things into that space–dragging and dropping holograms.